The Turing Test is a trial applied to an artificial intelligence in which conversation reveals whether the tester is talking to a person or a machine. It’s a test of the machine’s quality: whether an artificial intelligence is sophisticated enough to trick a person into believing they’re talking to another person. The technology that needs testing has changed a lot since Alan Turing first proposed the test. Now we’re part of what needs to be tested. Now we’re a component in a Möbius Mirror.

A human can perform the Turing Test on a machine to gauge how advanced the machine’s intelligence is. But what happens when the human doing the testing and the machine being tested are connected? With neural interfaces, and with machine consciousness resembling the human mind, is a new kind of “Turing Test” required? It would be one where we try to discern who’s doing the thinking: the machines inside us, or ourselves apart from our machines.

… because where it matters most you probably can’t win.

Let’s consider a neural interface that provides a voice in our heads or, better yet, delivers information more immediately and directly. Here are some ways that might work if we had neural interfaces attached to an AI capable of speaking to us, and more:

- Maybe we can customize the voice, maybe we can’t. Maybe it can speak to us in our own voice, indistinguishable from the other thoughts we have.

It would be pretty slow waiting for Emily Blunt to explain things to me. Pleasant at first, but it would get old and annoying quickly. If I had to keep listening, I’d probably speed her up to a chipmunk voice eventually, if I could.

- What if we could ask ourselves a question and the answering memory came from a combination of digital and neural signals, yet was felt as a single memory?

Serving me information faster than I could say it to myself would certainly be ideal: information served pre-verbally.

- What if we had such an individual digital companion adding to the thoughts and memories we’re capable of? How would we tell whether those thoughts and memories come from the organic side of us or the digital side of us?

If it were really good, really useful and powerful, then we certainly couldn’t.

It’s hard to imagine how we could, or whether the distinction would matter much to individuals connected to machines in this way. This is where the concern lies.

The Möbius Mirror: A Different Kind of Turing Test For A Different Kind of Intelligence

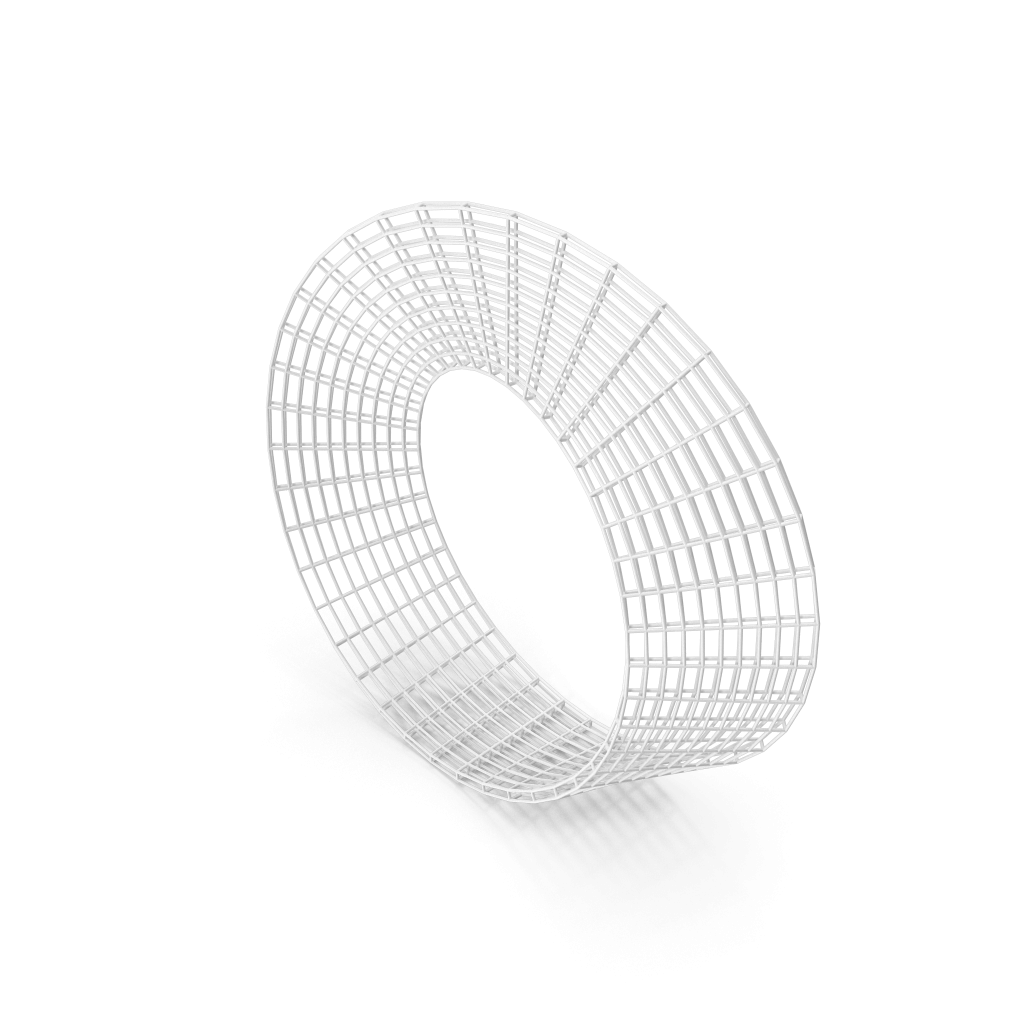

Let’s give a name to the “test” in which an individual sharing their consciousness and awareness with a machine has to discern whether thoughts or ideas originate with the machine or with themselves: the Möbius Mirror Test.

Once AI and neural interfaces connected to human minds are sophisticated enough to resemble human thought, nobody will be able to credibly trust the results of this test, which makes the test useless in an important way. Consider why.

Let’s imagine a Möbius strip, a shape that has only one side. Now apply that idea to a person looking into a mirror, a person whose consciousness is partly mechanical. When the person is AI-enhanced and digitally or neurally connected, it’s not the mirror that has the strange shape: it’s the observer. The observer might seem to have two sides, but as a whole they may not. Once the technology is powerful enough to be useful, those two sides may become two things that can no longer be separated.

The test is simple:

Determine whether information within a cybernetic system (for example, a human with a neural interface or with a cell phone) originates from the individual or from the digital components they’re using. When a machine and a human are connected and communicating or deciding together, which one controls, and is responsible for, the communication and the decision?

This test could be applied by third parties, but the connected individual can also perform it on themselves.

Today’s cell phones are simple yet very powerful.

For example, it’s pretty clear to a person holding a cell phone whether they remembered an appointment or their phone reminded them. It’s pretty clear to a person using a phone to research a fact that the information didn’t come from their own memory. You can pick the phone up. You can put the phone down. You’re a cybernetic system whose boundaries are really clear and whose disconnection is easy (hypothetically … right?)

Tomorrow’s AI will be deeply connected by demand.

Imagine now that you’ve got a highly sophisticated local AI connected directly to your brain, feeding you information faster than it could be verbally processed. A digital “recollection” would be faster and more sensorially rich than a biological memory. A conclusion that weighed all the factors most important to you might not even be shared with you, but instead used to drive behaviors that feel like choices. You might feel driven by the same old organic compulsions as before; by design, nothing would feel different. But it would be very different. You’d be very different.

The Observer’s Been Transformed

Once the observer, the questioner, is connected, they can’t know. If the AI is powerful enough, it will provide the first and loudest answers to the question: the answers with the most certainty, tied to references and delivered with mathematical precision.

Does this mean we shouldn’t employ neural interfaces? I can’t answer that, because I don’t know the future that will offer us that choice, and I don’t understand what the costs of not adopting them would be. But we need to beware the Möbius Mirror!

Humans With The Will To Unplug: An Endangered Species?

Plugging in is almost certain to be irreversible, whether because the process is surgical or because disconnecting or disabling the interface is painful, even if designs are required to include safety mechanisms. Its irreversibility is made even more certain by the will of the connected to stay connected. Will that will be driven by decisions and motivations that are mechanical or biological?

Who will be doing the voting?

Are their “votes” going to be driven by the organic processes that every human in history has used to define themselves? Or will their participation in society, and their votes with ballots or dollars, be chosen by factors outside themselves that they’re unaware of, while they believe it’s “them” deciding?

This aspect of transforming ourselves into more machine-driven cybernetic consciousnesses should be part of the discussion. Leaving it off the table as we plod ahead leveraging digital intelligences is very dangerous. We need to draw strong lines between what we are with digital consciousness and what we are without it.

Where has the line been?

Where will it be when the majority of human beings are able to choose the Möbius Mirror?

Right now you’re in a mixed experience, a cybernetic system where you’re integrated with a digital component, because you’re reading this. When are you purely human? Where is that line in YOU? Is it a line you even care to draw? What will we be if the will to draw it fades as technology becomes more powerful? How do we avoid becoming Möbius Mirrors where it feels like there’s a line, but there isn’t?